Machine Bias

Neither fate nor coincidence. Visualizing structural discrimination and machine bias.

PROJECT

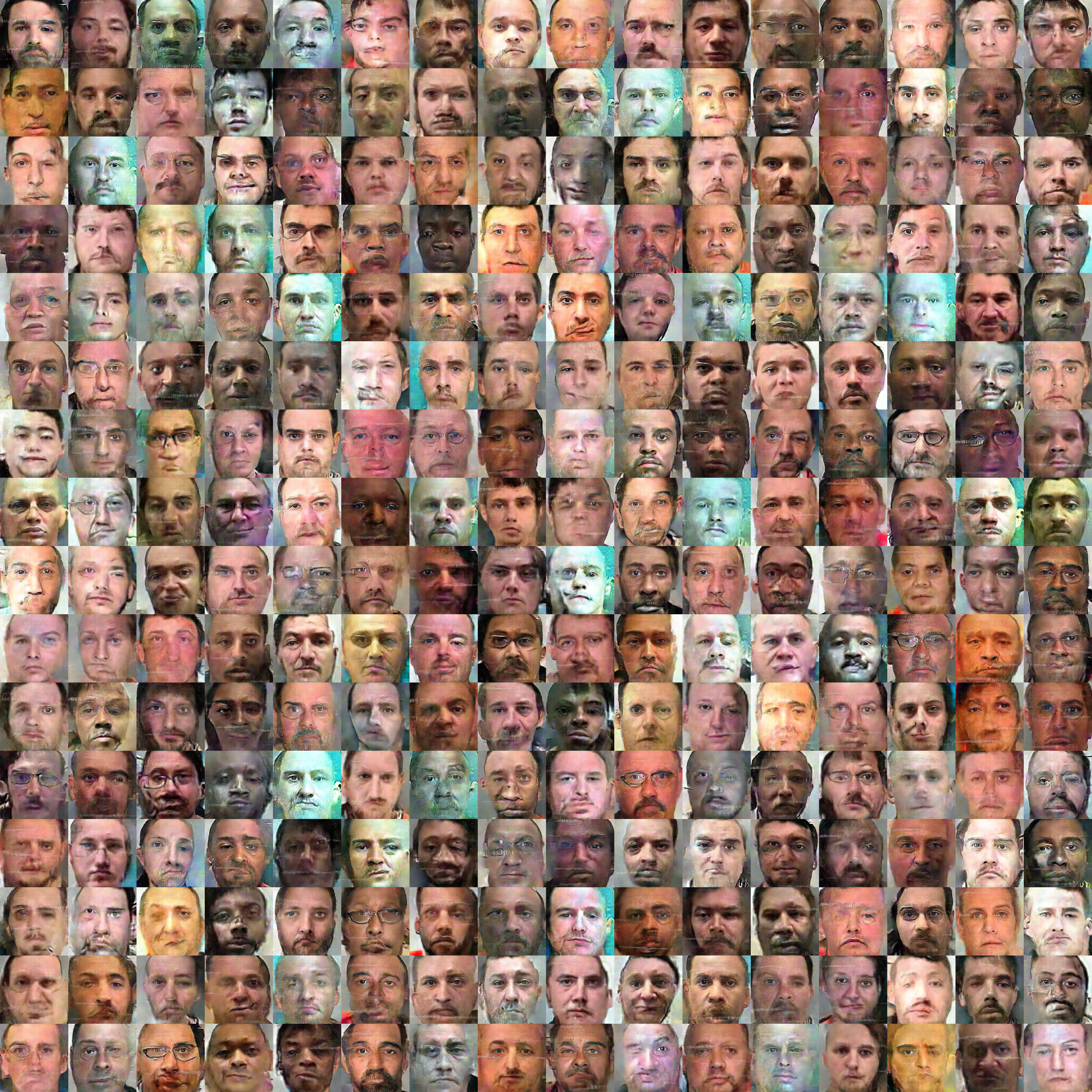

My goal was to make the controversy around predictive policing, a system that is otherwise hard to grasp, somewhat tangible. To do so, I used a machine learning model to generate the faces of future prisoners from thousands of photographs of US inmates.

Can we tell what future inmates will look like based on the faces of current convicts? If a dataset is shaped by structural discrimination, it is impossible to produce an outcome free of bias: the bias is passed on automatically into the output of the machine learning model.
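To make that mechanism concrete, here is a minimal toy sketch, not the model used in this project. It assumes a hypothetical training set with two abstract groups, "A" and "B", and a "model" that simply learns how often each group appears and then samples from that. A GAN is far more complex, but the principle is the same: a model fitted to the distribution of its data tends to reproduce that distribution, skew included.

```python
import random
from collections import Counter


def fit_and_sample(training_labels, n_samples, seed=0):
    """Fit a categorical distribution to the labels, then draw new samples from it."""
    counts = Counter(training_labels)
    groups = sorted(counts)
    weights = [counts[g] for g in groups]
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    return rng.choices(groups, weights=weights, k=n_samples)


# Hypothetical, deliberately skewed training set: 80% group "A", 20% group "B".
training = ["A"] * 800 + ["B"] * 200

generated = fit_and_sample(training, n_samples=10_000)
share_a = generated.count("A") / len(generated)

# The generated share of "A" lands close to the 80% share in the training data.
print(f"share of A in training data: 0.80, in generated data: {share_a:.2f}")
```

Nothing in the sampling step adds the skew. It is already in the data the model was fitted to.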

METHOD

I scraped, cropped and resized around 3,000 photographs of inmates from across all US states and trained a DCGAN model for 600 epochs (around 24 hours of training in this case) to produce entirely new images based on the dataset. Here, too, we can ask: what does it mean to build a dataset out of photos stolen from www.mugshots.com, a privately owned website that charges people to have their own mugshot, name and even address removed? Who can afford to pay, and who will stay in this grotesque database forever and, in this case, lend their face to the creation of a new generation of prisoners?
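The cropping step can be sketched roughly as follows. This is an illustration under stated assumptions, not the code used for this project: the function name `square_crop_box`, the `margin` value and the `(x, y, w, h)` face box format are all hypothetical, standing in for whatever a face detector returns. The idea is to turn a detected face rectangle into a square region that stays inside the photo, so every training image has the same aspect ratio before resizing.

```python
def square_crop_box(face, image_size, margin=0.3):
    """Return a square (left, top, right, bottom) box around a detected face.

    face: (x, y, w, h) bounding box from any face detector (hypothetical input).
    image_size: (width, height) of the source photo.
    margin: padding on each side, as a fraction of the larger face side
            (an assumed value, not taken from the project).
    """
    x, y, w, h = face
    img_w, img_h = image_size

    # Side length: larger face dimension plus padding, capped by the image size.
    side = min(int(round(max(w, h) * (1 + 2 * margin))), img_w, img_h)

    # Center the square on the center of the face box.
    cx, cy = x + w / 2, y + h / 2
    left = int(round(cx - side / 2))
    top = int(round(cy - side / 2))

    # Shift the square back inside the image if it sticks out.
    left = max(0, min(left, img_w - side))
    top = max(0, min(top, img_h - side))

    return left, top, left + side, top + side


# Example: a 50x60 face box in a 300x400 photo.
box = square_crop_box((100, 80, 50, 60), (300, 400))
print(box)
```

With an image library such as Pillow, a box like this could be passed to `Image.crop(box)` and followed by `resize((64, 64))`. The original DCGAN paper works at 64×64 pixels. The resolution used for this project is not stated here.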

BACKGROUND

In more and more countries, predictive policing and pretrial risk assessment decisions are handed over to machines and algorithms. How does a machine decide in which district crime is likely to happen, or which convict will reoffend? Every machine learning decision is based on massive amounts of data transformed into probabilities, but is that data only creating more of the same? What does it mean to use pre-crime and risk assessment in a world where People of Color face multiple forms of structural discrimination, while White people are given a head start and the benefit of the doubt? Is pre-crime trying to cure the symptoms rather than the cause?



“All that you touch, you change. All that you change, changes you.” — Octavia E. Butler